Hire engineers who actually ship.

Agentic assessments that measure how candidates collaborate with AI, so you see how they actually build, not just how they problem-solve in isolation.

We’re not shipping self-serve assessments yet. Book a walkthrough, join the waitlist, or read how /oa works today.

Curious about the candidate and company flows? Read how /oa works.

Hiring for the way engineers work today.

Your best engineers ship with AI in the loop. Most assessments either ban it outright, or allow a stripped-down version and return scores opaque enough that recruiters can't explain what a 100 versus a 200 actually means. Either way, you're measuring the wrong thing.

AI-“cheating” framing

Blocking assistants doesn't remove AI from the equation. It just penalizes the candidates who are most fluent with the tools your team actually uses. Your signal should reflect how people perform with their real stack.

AI-fluency framing

Strong engineers steer AI. They scope problems, write precise prompts, verify outputs, and iterate. AlgoArena captures those behaviors so you hire for how people ship, not how they perform without their stack.

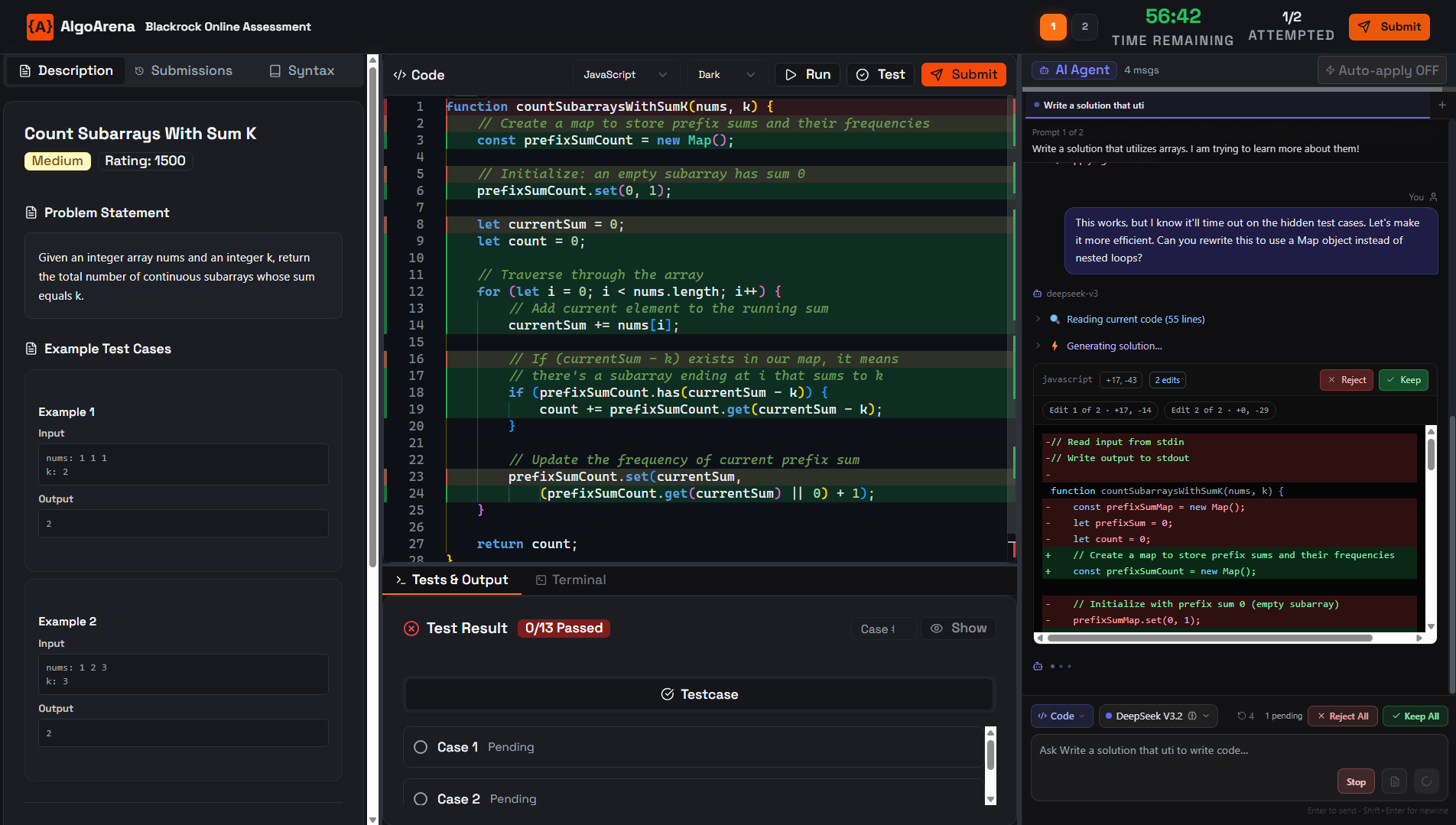

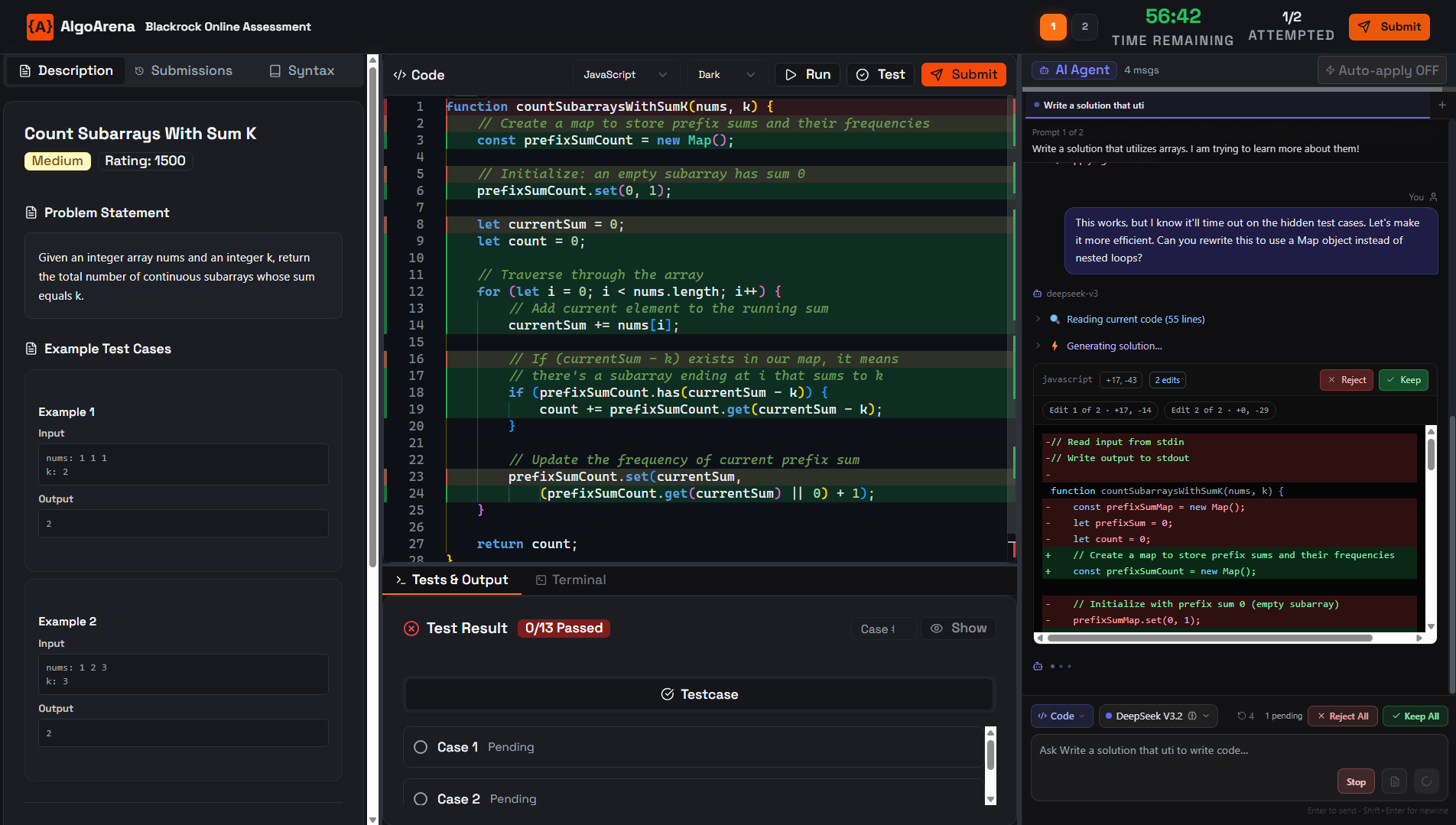

In the reviewer view, open a candidate, scrub the timeline, and see prompts, tests, and decision notes.

Concrete signals we measure

Specificity, context, intent, not just volume.

Refinement vs blind retries, with depth per sub-goal.

Runs after AI edits, error recovery, test discipline.

Efficiency with quality, not speed alone.

Apply-without-verify is a risk signal.

Purposeful model use across plan / code / debug.

Watch them work.

Go beyond the final score. Watch a full replay of how the candidate approached the problem. See every keystroke, every tab-out, and how quickly they recovered from compilation errors, all rendered as a scrubbable timeline rather than a wall of logs.

Embrace the AI era.

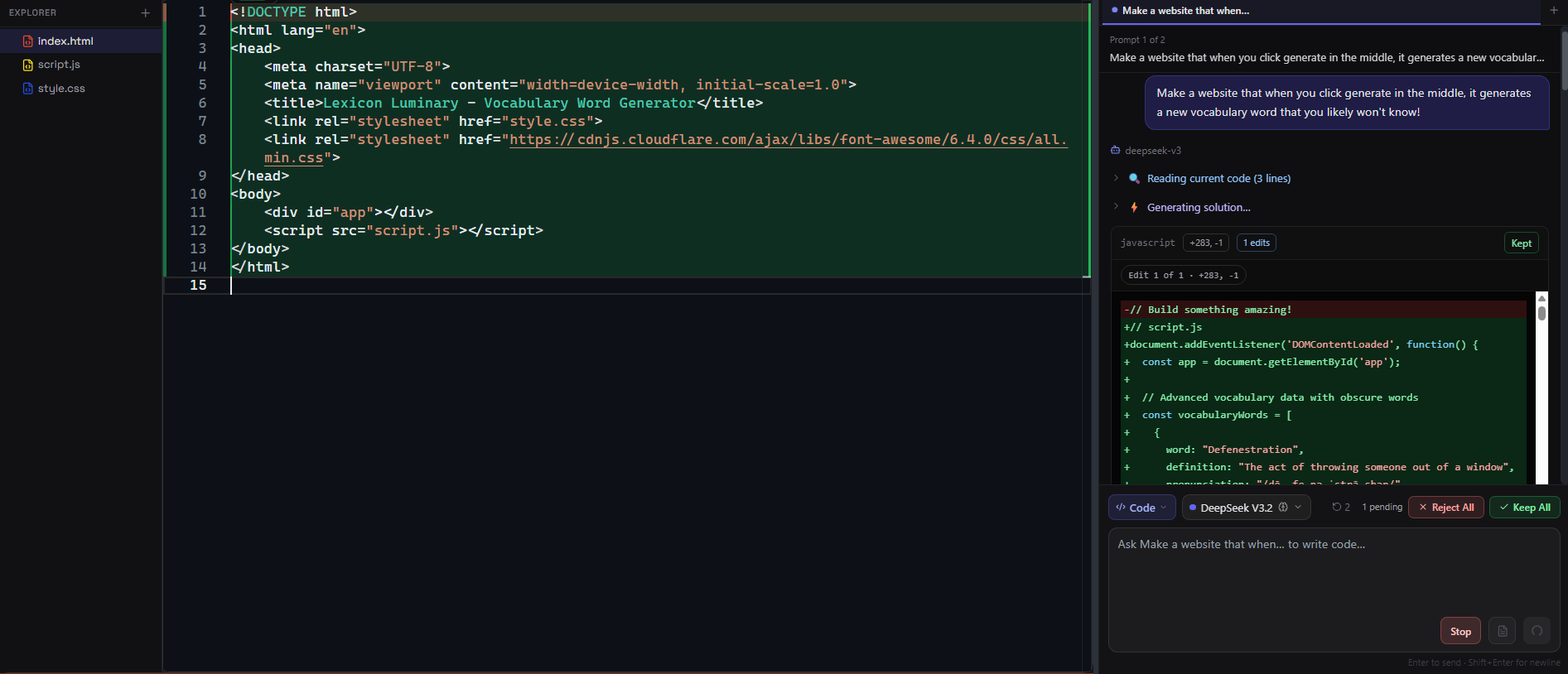

We give candidates a full Cursor-style IDE with four reasoning modes, inline edits, full codebase context, and model selection, then score how effectively they use it. Blind copy-pasters are visible. Thoughtful prompters get credit for the skill they actually have. Every candidate gets the same models, the same context window, and the same time, so the score reflects skill rather than personal API spend.

Move beyond LeetCode.

Spin up full-stack Next.js or Python environments in the browser and ask candidates to fix a bug in a multi-file architecture, or write a unit test suite from scratch. Assess the work, not the puzzle.

Competitive snapshot

The landscape has shifted. Here's where each tool stands today.

| Capability | Traditional OA | HackerRank | CoderPad | CodeSignal | Codility | AlgoArena |
|---|---|---|---|---|---|---|
| Cursor-style IDE (inline edits, modes, model selection) | ✗ | ~ | ~ | ✗ | ✗ | ✓ |
| Interpretable AI Fluency breakdown | ✗ | ~ | ✗ | ~ | ~ | ✓ |
| Equal model access across all candidates | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ |
| Session replay + AI lineage | ✗ | ~ | ~ | ✗ | ~ | ✓ |
| Code attribution (human / AI / hybrid) | ✗ | ~ | ✗ | ~ | ~ | ✓ |
| Multi-agent orchestration scoring | ✗ | ✗ | ✗ | ✗ | ✗ | ✓ |

✓ fully supported · ~ partial or limited · ✗ not available

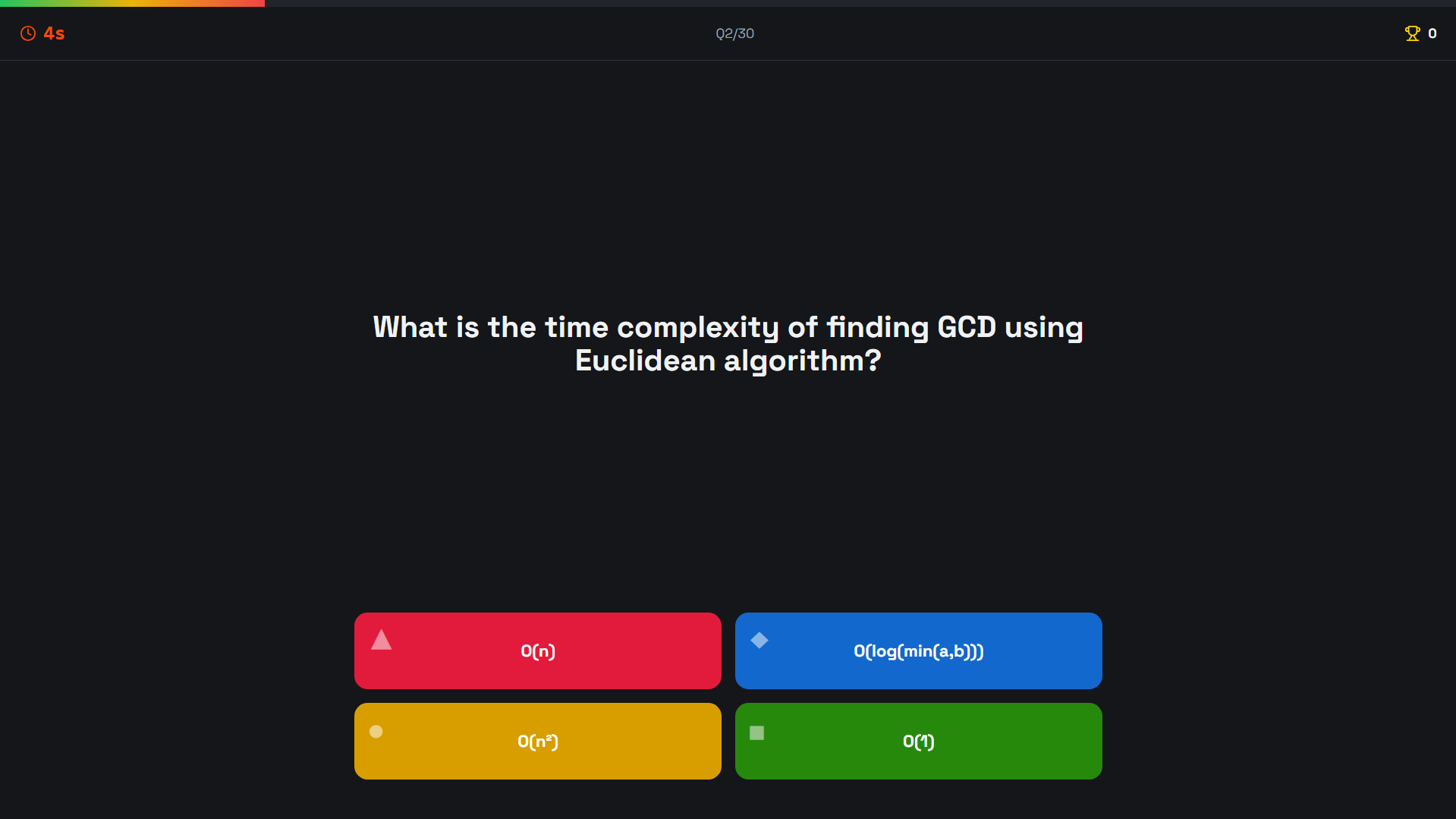

Every OA powers the benchmark.

Every assessment session contributes anonymized data to the industry's first real-world AI coding benchmark, ranking models by how well they collaborate with real engineers under real pressure.

View the Benchmark

Illustrative data

Early access (OA in progress).

We're building AI-native assessments that measure how candidates actually work. If you want a pilot when it's ready, we'll set it up with you.

Questions hiring teams ask

How is this different from CoderPad or CodeSignal?

CoderPad is built for live interviews, not AI-native assessment. HackerRank has an AI assistant in-IDE but scores AI usage as a single opaque grade. Codility's Cody assistant is a chat window bolted onto an otherwise traditional assessment. CodeSignal's AI offering has no inline edits, no planning mode, no model selection, and no directory awareness. Their AI fluency scores are opaque enough that recruiters at major companies have told us they can't explain what a score of 100 versus 200 actually measures. AlgoArena gives candidates a full Cursor-style IDE with four reasoning modes and equal model access regardless of personal API spend, and it returns scores with clear, interpretable breakdowns of what was measured and why.

What about anti-cheating?

We use behavior analysis (tab focus, paste attribution, iteration patterns) instead of webcam proctoring. Candidates who game the system exhibit measurably different patterns. That approach is more respectful to candidates than lock-down software on their personal machine.

How much does it cost at scale?

OA mode is still in development. When we open pilots, pricing will scale by candidate volume (not seats) because hiring is bursty.

Can I use my own problems?

Yes. You can pick from the curated library or upload your own multi-file workspace problems. Your content stays yours.

How do I actually get started?

Book a demo or join the waitlist with your company and roles. We’ll line up a pilot as OA mode matures; self-serve authoring is not the default yet.

Hire with certainty.

Join early access and we'll set up a pilot when OA mode is ready.